The "brain" of any modern smartphone is its SoC (system-on-chip), an incredibly complex micromachine. It packs a CPU and GPU, audio and video processing, wireless communication, and power management on a piece of silicon the size of a fingernail. SoCs are getting even more sophisticated. One recent addition is the NPU, which facilitates AI functions.

Your phone likely has an NPU if it's a recent model. Even the inexpensive Samsung Galaxy A25, one of the best budget Android phones, includes one on its Exynos 1280 chip. But what does the NPU do, and what difference does it make? Let's explore.

What's an NPU, and which phones have one?

NPUs have been around longer than you think

NPU stands for neural processing unit. It's a computing module in a smartphone's SoC, like the CPU (central processing unit) and GPU (graphics processing unit). Recent Snapdragon, Exynos, Dimensity, and Apple A-series SoCs have NPUs, as do some desktop and laptop processors from Intel, AMD, and Apple.

Phone SoCs have had NPUs for a while. Qualcomm has had its AI Engine, a combination of hardware and software for AI tasks, on the Snapdragon 820 since 2015. Apple introduced its Neural Engine NPU in 2017 with the A11 Bionic chip. Still, NPUs are more relevant today because of the hype surrounding AI and the features it brings, as unfinished as those features may be.

Why do we need NPUs in phones?

The job of an NPU is to accelerate tasks related to artificial intelligence and machine learning applications. Examples include identifying people and objects in images, generating text and images, converting speech to text, translating in real time, and predicting the next word you may want to type.

You don't need an NPU to perform these functions, but it makes the process faster, more energy-efficient, and less reliant on cloud computing. The computations required by AI tasks are so specific that optimizing a processing unit for them makes sense.

If you're curious and love math, A.C.C. Coolen at King's College London dives deep into the mathematics of neural networks. Also, Michael Stevens of Vsauce fame demonstrates a working neural network in a YouTube video. Notice how basic but numerous operations are executed simultaneously for the network to work.
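
To make "basic but numerous operations" concrete, here is a minimal Python sketch of a single neural network layer. The function name, weights, and inputs are invented for illustration and don't come from any real model. Every output is built from repeated multiply-and-add steps, known as multiply-accumulate (MAC) operations.

```python
# Illustrative sketch: one tiny neural network layer computed by hand.
# All names and numbers here are made up for the example.

def dense_layer(inputs, weights, biases):
    """Return the layer outputs and the number of MAC steps performed."""
    outputs = []
    mac_count = 0
    for neuron_weights, bias in zip(weights, biases):
        total = bias
        for x, w in zip(inputs, neuron_weights):
            total += x * w  # one MAC: multiply, then add to the running sum
            mac_count += 1
        outputs.append(max(0.0, total))  # ReLU activation: negatives become 0
    return outputs, mac_count


inputs = [1.0, 2.0, 3.0]
weights = [
    [1.0, 0.0, -1.0],  # weights for neuron 1
    [0.5, 0.5, 0.5],   # weights for neuron 2
]
biases = [0.0, 1.0]

outputs, macs = dense_layer(inputs, weights, biases)
print(outputs, macs)  # [0.0, 4.0] 6
```

This toy layer needs only six MACs. No neuron's sum depends on another's, so hardware with many MAC units can work on them at the same time instead of looping through them one by one as this code does.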

CPU vs. NPU: How are they different?

The CPU is a general-purpose unit that can do one or a few complex math operations quickly and with high precision. However, AI jobs require many calculations to be run in parallel, while precision isn't as important. A GPU is a better fit for the task than a CPU, thanks to its parallel nature. Still, an NPU excels because of its efficiency, as IBM points out. An NPU can deliver similar AI performance while using a fraction of the energy, which makes it ideal for mobile, battery-powered devices.
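
One way to see why reduced precision is tolerable is to repeat a calculation with small integers instead of full floating-point numbers. The sketch below assumes a simple 8-bit scheme with one shared scale per list, chosen only for illustration. The helper names and values are hypothetical, and real NPUs and AI frameworks use their own, more elaborate methods.

```python
# Illustrative sketch: the same dot product at full and at reduced precision.
# The quantization scheme and all values are assumptions for this example.

def quantize(values, scale):
    """Map floats to integers in [-127, 127] using a shared scale factor."""
    return [max(-127, min(127, round(v / scale))) for v in values]


def dot(a, b):
    """Sum of element-wise products: a chain of multiply-accumulate steps."""
    return sum(x * y for x, y in zip(a, b))


weights = [0.42, -0.17, 0.88, -0.63]
inputs = [0.9, 0.3, -0.5, 0.7]

# Pick each scale so the largest magnitude in the list maps to 127.
w_scale = max(abs(w) for w in weights) / 127
x_scale = max(abs(x) for x in inputs) / 127

exact = dot(weights, inputs)

# Integer-only multiply-accumulate, converted back to a float at the end.
approx = dot(quantize(weights, w_scale), quantize(inputs, x_scale)) * w_scale * x_scale

print(f"full precision: {exact:.4f}")
print(f"8-bit integers: {approx:.4f}")
```

The two results land close to each other even though the second uses far fewer bits per number. Smaller numbers take less memory and less energy to move and multiply, which is part of why hardware built for AI can afford to trade precision for efficiency.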

The benefits of on-device AI

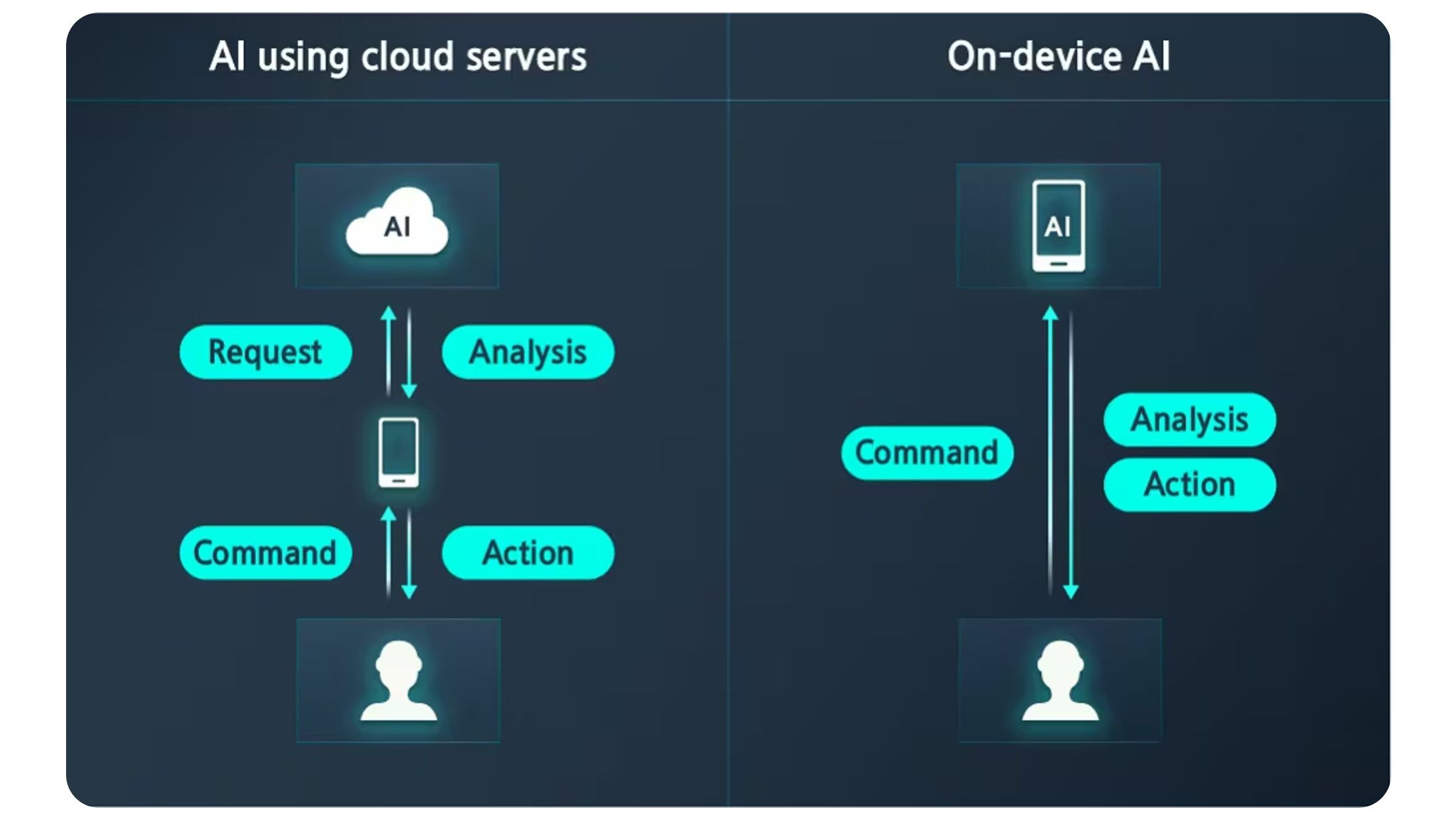

Source: Samsung

Another advantage of having an NPU as part of the SoC is that it performs some AI operations on the device instead of in the cloud, which would be slower. This makes sense for lighter loads like speech-to-text conversion. It's also ideal when sensor input is involved and quick results are expected, such as detecting objects in a scene in the camera app. In these cases, the AI model, which is the code that processes your input, is stored locally. Applications like the Google Pixel Studio image generator use a hybrid approach, leveraging local and cloud AI models.

On-device AI is also great for privacy. The personal data you provide (in speech, text, or video form) doesn't need to leave your phone. This removes the chance of bad actors accessing it in a breach of a cloud service.

How is a Google Pixel's TPU different?

If you look at the specs page for the Google Pixel 9, a phone with heavily promoted AI features, you won't find any mention of an NPU. That's because it uses a TPU (tensor processing unit).

Like an NPU, the TPU accelerates AI calculations. What's different is that TPUs are custom-designed by Google. You'll find them only in Google hardware and the company's data centers. Tensor processing units are optimized for TensorFlow, an open source software library developed by Google and made for machine learning and AI applications.

What are NPU TOPS?

Like horsepower, but for NPU chips

While most new phones have an NPU, some perform AI computations faster. TOPS (trillions of operations per second) is the common measurement of AI processor performance. Qualcomm explains that two factors determine an NPU's TOPS: the frequency (clock speed) at which it runs and the number of MAC (multiply-accumulate) operation units at its disposal.
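
The relationship between those two factors can be sketched in a few lines of Python. This assumes the commonly used convention that each MAC counts as two operations (one multiply and one add) and that every MAC unit completes one MAC per clock cycle. The function name and the chip figures below are hypothetical and don't describe any real processor.

```python
# Illustrative sketch: estimating theoretical peak TOPS.
# Assumes one MAC per unit per clock cycle, counted as two operations.

def peak_tops(mac_units, clock_hz):
    """Return theoretical peak performance in trillions of operations per second."""
    ops_per_second = 2 * mac_units * clock_hz  # multiply + add for every MAC
    return ops_per_second / 1e12


# Hypothetical NPU: 16,384 MAC units running at 1.4 GHz.
print(f"{peak_tops(16_384, 1.4e9):.1f} TOPS")
```

Doubling either the number of MAC units or the clock speed doubles the result. A figure computed this way is a theoretical ceiling, so real workloads typically land below it.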

The recently announced Snapdragon 8 Elite chip is touted as having 45% better AI performance than its predecessor, the Snapdragon 8 Gen 3. As for the latter, it tops out at 45 TOPS. That's not much next to the 1,300+ TOPS delivered by the high-end Nvidia RTX 4090 desktop graphics card. Then again, a phone doesn't suck 450 watts like Nvidia's beast.

It's difficult to put the numbers into context since TOPS requirements for AI tasks are rarely mentioned. However, Microsoft's Copilot+ PC features require an NPU capable of at least 40 TOPS.

Are NPUs here to stay?

Given their highly specialized nature, neural processing units are not on track to replace CPUs or GPUs. Instead, they're meant to improve the efficiency of mobile SoCs by taking on AI tasks while saving battery power. With AI becoming integrated into smartphones, we'll hear more about NPUs in the future. For now, check out our favorite Samsung Galaxy AI features to explore what AI can do for you today.